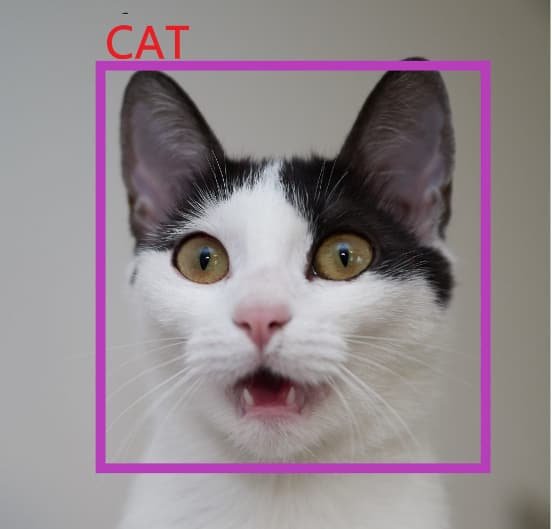

Object detection is a computer vision technique in which a software system can detect, locate, and track objects in a given image or video. What distinguishes object detection is that it identifies both the class of each object (person, table, chair, etc.) and its location-specific coordinates in the image. The location is indicated by drawing a bounding box around the object. The bounding box may or may not locate the object accurately, and how well an algorithm localizes objects inside an image is a key measure of its detection performance. Face detection is one example of object detection.
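A common way to quantify how accurately a predicted bounding box locates an object is Intersection over Union (IoU): the area where the predicted and ground-truth boxes overlap, divided by the area they cover together. IoU is not named in the text above, so treat this as a minimal sketch that assumes boxes are given as `(x1, y1, x2, y2)` pixel coordinates; the function name `iou` is illustrative.

```python
def iou(box_a, box_b):
    """Intersection over Union of two boxes given as (x1, y1, x2, y2)."""
    # Corners of the intersection rectangle
    ix1 = max(box_a[0], box_b[0])
    iy1 = max(box_a[1], box_b[1])
    ix2 = min(box_a[2], box_b[2])
    iy2 = min(box_a[3], box_b[3])
    # Width and height clamp to zero when the boxes do not overlap
    inter = max(0, ix2 - ix1) * max(0, iy2 - iy1)
    area_a = (box_a[2] - box_a[0]) * (box_a[3] - box_a[1])
    area_b = (box_b[2] - box_b[0]) * (box_b[3] - box_b[1])
    union = area_a + area_b - inter
    return inter / union if union > 0 else 0.0


predicted = (50, 50, 150, 150)
ground_truth = (60, 60, 160, 160)
print(round(iou(predicted, ground_truth), 3))  # 0.681
```

A score of 1.0 means a perfect match and 0.0 means no overlap; detection benchmarks typically count a prediction as correct only above some IoU threshold.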

Object detection models can be pre-trained or trained from scratch. In most use cases, we start from the weights of a pre-trained model and then fine-tune them for our specific requirements and use case.

Object Detection using YOLO algorithm

How does object detection work?

Generally, the object detection task is carried out in three steps:

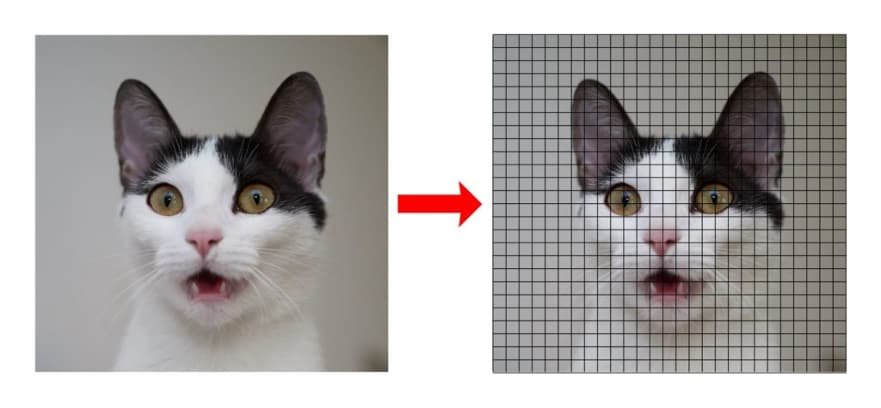

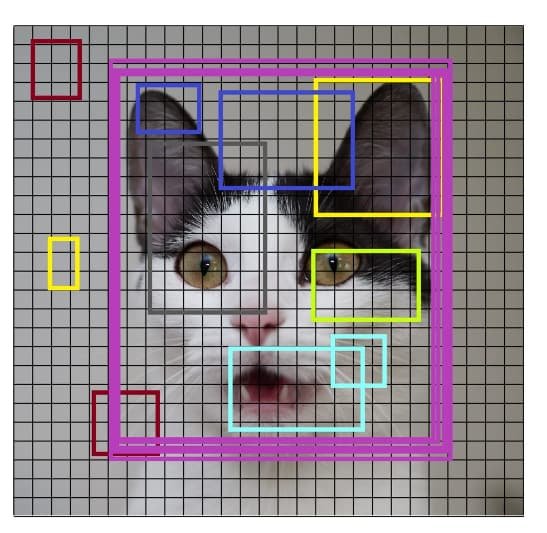

First, the system generates a large set of small candidate regions from the input, as shown in the images above. As you can see, these candidate bounding boxes span the full image.

Next, features are extracted from each candidate rectangle to predict whether it contains a valid object.

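Finally, overlapping boxes that cover the same object are reduced to a single bounding rectangle using Non-Maximum Suppression (NMS), which keeps the highest-scoring box and discards the boxes that overlap it heavily.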
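The suppression step can be sketched as the common greedy variant of NMS: sort the boxes by confidence, keep the best one, drop every remaining box whose overlap (Intersection over Union) with an already-kept box exceeds a threshold, and repeat. This is a minimal pure-Python sketch; the `(x1, y1, x2, y2)` box format, the helper names, and the 0.5 threshold are illustrative assumptions. Libraries such as torchvision provide an optimized equivalent (`torchvision.ops.nms`).

```python
def iou(a, b):
    """Intersection over Union of two (x1, y1, x2, y2) boxes."""
    inter_w = max(0, min(a[2], b[2]) - max(a[0], b[0]))
    inter_h = max(0, min(a[3], b[3]) - max(a[1], b[1]))
    inter = inter_w * inter_h
    union = (a[2] - a[0]) * (a[3] - a[1]) + (b[2] - b[0]) * (b[3] - b[1]) - inter
    return inter / union if union > 0 else 0.0


def non_max_suppression(boxes, scores, iou_threshold=0.5):
    """Greedy NMS: return indices of the boxes to keep, highest score first."""
    # Visit boxes from highest to lowest confidence
    order = sorted(range(len(boxes)), key=lambda i: scores[i], reverse=True)
    keep = []
    for i in order:
        # Keep a box only if it does not overlap too much with any kept box
        if all(iou(boxes[i], boxes[k]) <= iou_threshold for k in keep):
            keep.append(i)
    return keep


boxes = [(10, 10, 60, 60), (11, 11, 61, 61), (100, 100, 150, 150)]
scores = [0.9, 0.75, 0.8]
print(non_max_suppression(boxes, scores))  # [0, 2]
```

Box 1 is suppressed because it overlaps the higher-scoring box 0 almost completely (IoU of about 0.92), while box 2 survives because it covers a different object.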

Optical character recognition

OCR stands for "Optical Character Recognition." It is a technology that recognizes text within a digital image. It is commonly used to recognize text in scanned documents and images.

Many OCR implementations were available even before the deep learning boom of 2012. Although OCR was popularly believed to be a solved problem, it remains challenging, especially when text images are captured in unconstrained environments.

I am talking about complex backgrounds, noise, lighting, different fonts, and geometric distortions in the image.

It is in such situations that machine learning OCR (or machine learning image processing) tools shine.

Challenges in OCR arise mostly from the nature of the task at hand. We can generally divide OCR tasks into two categories:

Structured Text: text in a typed document, with a standard background, proper rows, a standard font, and dense text.

Unstructured Text: text at random places in a natural scene, with sparse text, no proper row structure, a complex background, and no standard font.

Datasets for unstructured OCR tasks

Many datasets are available for English, but it's harder to find datasets for other languages. Different datasets present different tasks to be solved. Here are a few examples of datasets commonly used for machine learning OCR problems.

SVHN dataset

The Street View House Numbers (SVHN) dataset contains 73,257 digits for training, 26,032 digits for testing, and 531,131 additional digits as extra training data. It has 10 labels, one for each digit 0-9. SVHN differs from MNIST in that its images show house numbers against varying backgrounds. Rather than consisting only of isolated digit images as in MNIST, the dataset provides bounding boxes around each digit.

Scene Text dataset

This dataset consists of 3000 images in different settings (indoor and outdoor) and lighting conditions (shadow, light, and night), with text in Korean and English. Some images also contain digits.

Devanagari Character dataset

This dataset provides 1,800 samples from 36 character classes, written by 25 different native writers in the Devanagari script.

There are many others as well, such as datasets for Chinese characters, CAPTCHAs, and handwritten words.

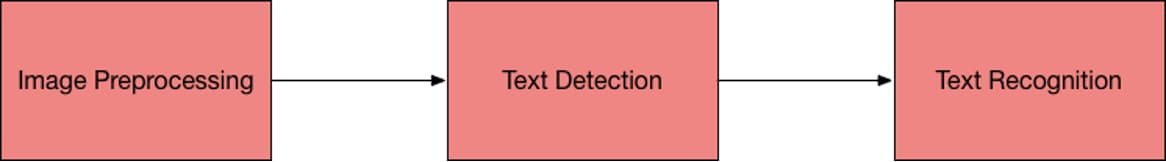

A typical machine learning OCR pipeline follows these steps:

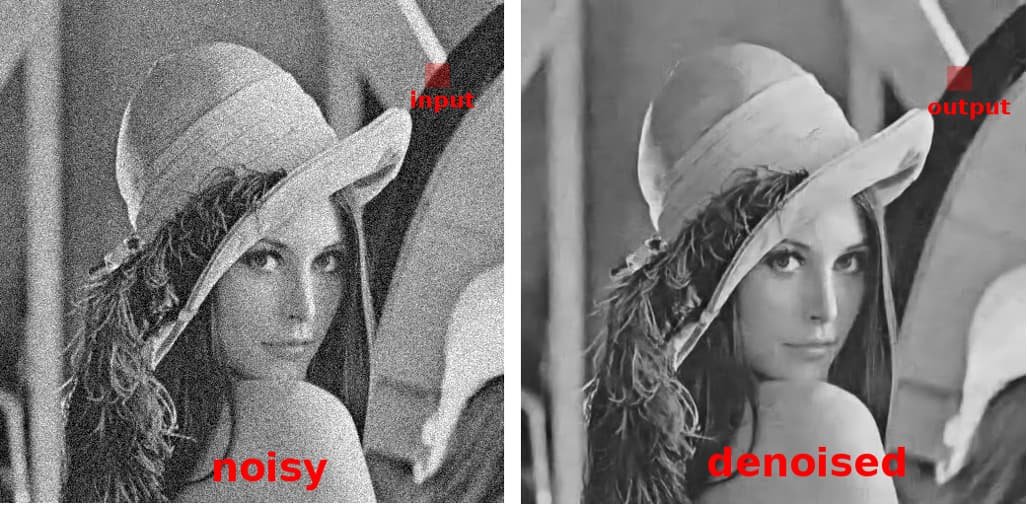

Preprocessing

Remove the noise from the image

Remove the complex background from the image

Handle the different lighting conditions in the image
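These preprocessing goals can be approximated with classic, non-learned image operations. The following is a simplified NumPy sketch, not a production pipeline: a median filter removes isolated noise pixels, and a local-mean (adaptive) threshold separates dark text from its surroundings, which copes with uneven lighting and simple backgrounds better than a single global threshold. The function names and parameter values (`k=3`, `block=15`, `offset=10`) are illustrative assumptions; OpenCV offers optimized versions of both operations (`cv2.medianBlur`, `cv2.adaptiveThreshold`).

```python
import numpy as np
from numpy.lib.stride_tricks import sliding_window_view


def median_denoise(img, k=3):
    """Remove salt-and-pepper noise with a k x k median filter (k odd)."""
    pad = k // 2
    padded = np.pad(img, pad, mode="edge")
    windows = sliding_window_view(padded, (k, k))
    return np.median(windows, axis=(-2, -1)).astype(img.dtype)


def adaptive_binarize(img, block=15, offset=10):
    """Mark a pixel as text (1) if it is darker than its local mean minus offset.

    Comparing each pixel with its own neighbourhood, rather than with one
    global threshold, compensates for gradual changes in lighting.
    """
    pad = block // 2
    padded = np.pad(img.astype(np.float64), pad, mode="edge")
    local_mean = sliding_window_view(padded, (block, block)).mean(axis=(-2, -1))
    return (img < local_mean - offset).astype(np.uint8)


def preprocess(gray):
    """Denoise, then binarize a grayscale uint8 image into a text mask."""
    return adaptive_binarize(median_denoise(gray))


# Synthetic 40x40 page: a left-to-right lighting gradient plus a dark stroke
gray = np.tile(np.linspace(120, 230, 40), (40, 1)).astype(np.uint8)
gray[18:22, 5:35] = 30  # horizontal text-like stroke
gray[5, 5] = 0          # an isolated noise pixel
mask = preprocess(gray)
print(mask[20, 20], mask[5, 5], mask[30, 30])  # 1 0 0
```

The stroke survives as text, while the noise pixel and the brighter right-hand side of the gradient are treated as background.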

Text Detection

Text detection techniques are required to detect the text in the image and create a bounding box around the portion of the image that contains text.
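To make the output of this step concrete: assuming a binary mask where text pixels are 1 (for example, the result of a thresholding step), the tightest axis-aligned bounding box is given by the extreme coordinates of the text pixels. This is a minimal sketch; `text_bounding_box` is a hypothetical helper, and it only handles a single text region. Separating several regions would need an extra step such as connected-component labeling.

```python
import numpy as np


def text_bounding_box(mask):
    """Return (x1, y1, x2, y2) enclosing all non-zero pixels, or None if empty."""
    ys, xs = np.nonzero(mask)
    if xs.size == 0:
        return None
    # x2 and y2 are exclusive, so the box can be used directly for slicing
    return int(xs.min()), int(ys.min()), int(xs.max()) + 1, int(ys.max()) + 1


mask = np.zeros((40, 40), dtype=np.uint8)
mask[18:22, 5:35] = 1  # a single text-like region
print(text_bounding_box(mask))  # (5, 18, 35, 22)
```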

Sliding window technique

A bounding box can be created around the text using the sliding window technique, although this is computationally expensive. In this technique, a window slides across the image and, at each position, a classifier decides whether the window contains text, much like a convolution kernel sliding over its input. Several window sizes are tried so that text regions of different sizes are not missed. A convolutional implementation of the sliding window, which shares computation between overlapping windows, can reduce the computation time.
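The basic (non-convolutional) multi-scale sliding window can be sketched as three nested loops: one over window sizes and two over positions. In this sketch, `toy_text_score` is a hypothetical stand-in for a trained text/no-text classifier (it simply measures the fraction of dark pixels), and the window sizes, stride, and threshold are illustrative assumptions, not recommended values.

```python
import numpy as np


def sliding_windows(image, window_sizes, stride):
    """Yield (x1, y1, x2, y2) for every window position at every window size."""
    h, w = image.shape[:2]
    for win_h, win_w in window_sizes:
        for y in range(0, h - win_h + 1, stride):
            for x in range(0, w - win_w + 1, stride):
                yield x, y, x + win_w, y + win_h


def toy_text_score(patch):
    """Hypothetical placeholder for a trained classifier: fraction of dark pixels."""
    return float((patch < 100).mean())


def detect_text(image, window_sizes=((8, 24), (16, 48)), stride=4, threshold=0.3):
    """Score every window and keep those that look like text."""
    detections = []
    for x1, y1, x2, y2 in sliding_windows(image, window_sizes, stride):
        score = toy_text_score(image[y1:y2, x1:x2])
        if score >= threshold:
            detections.append(((x1, y1, x2, y2), score))
    return detections


img = np.full((64, 64), 255, dtype=np.uint8)
img[20:28, 8:40] = 0  # a dark, text-like band on a white page
hits = detect_text(img)
```

The cost is visible in the loops: every window at every scale is classified independently, even though neighbouring windows share most of their pixels. The many overlapping hits this produces are what Non-Maximum Suppression, described in the object detection section, is meant to reduce to a single box.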

Text Recognition

Once we have detected the bounding boxes containing text, the next step is to recognize the text. There are several techniques for this.

CRNN

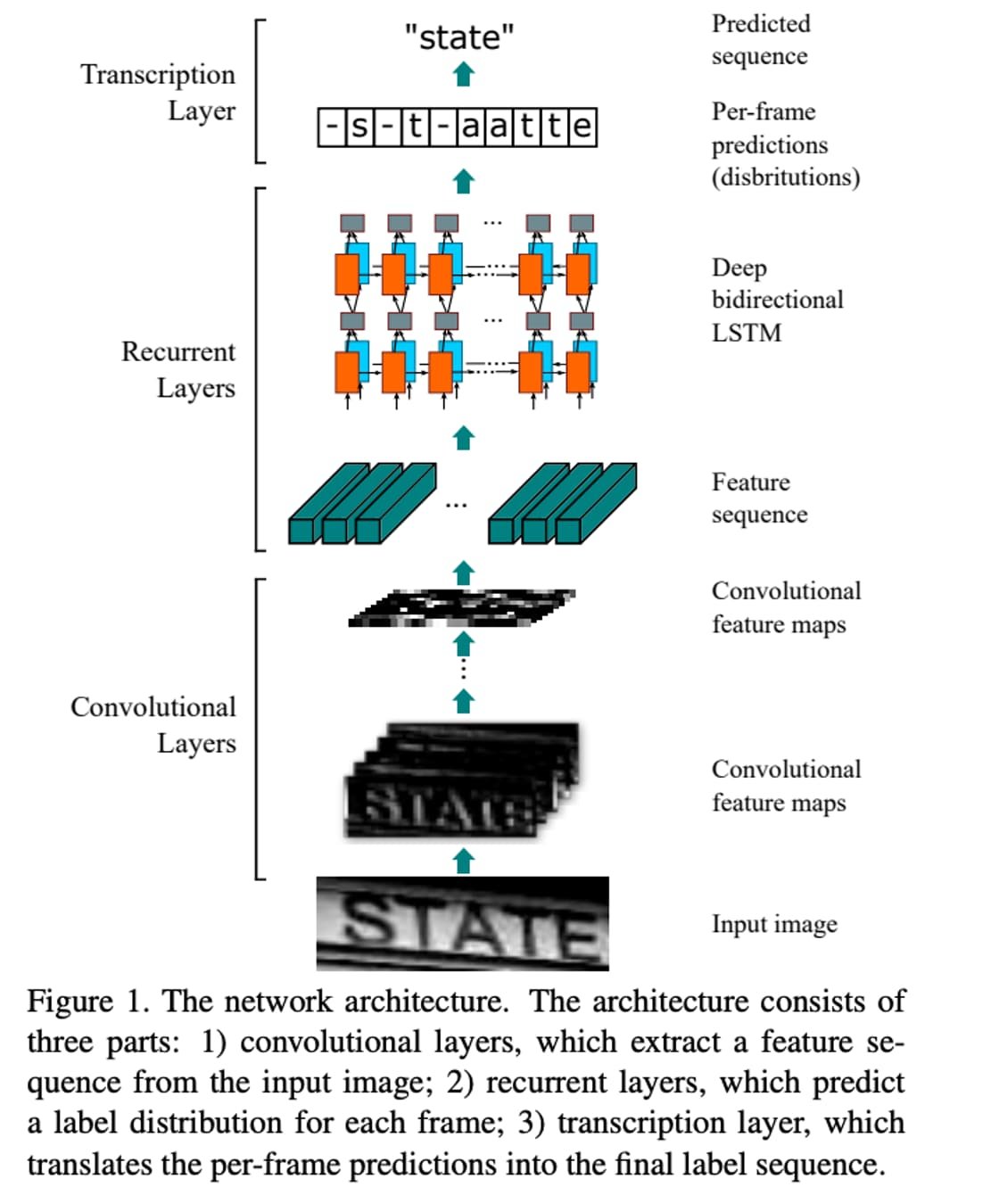

The Convolutional Recurrent Neural Network (CRNN) combines a CNN, an RNN, and a CTC (Connectionist Temporal Classification) loss for image-based sequence recognition tasks such as scene text recognition and OCR. The network architecture is taken from the 2015 paper "An End-to-End Trainable Neural Network for Image-based Sequence Recognition and Its Application to Scene Text Recognition" by Shi, Bai, and Yao.
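In a CRNN, the recurrent layers output one probability distribution per column (left-to-right slice) of the image features, over all characters plus a special CTC "blank". Turning that frame sequence into text requires CTC decoding. Below is a minimal sketch of greedy (best-path) decoding: take the most likely class at each frame, collapse consecutive repeats, then drop blanks. It assumes the blank is class 0 and class `i` maps to `alphabet[i - 1]`; the probabilities are made-up toy values, not real model output. Beam-search decoding is a common, more accurate alternative.

```python
BLANK = 0  # assumed index of the CTC blank class


def ctc_greedy_decode(frame_probs, alphabet):
    """Best-path CTC decoding of per-frame class probabilities."""
    # 1. Most likely class at every time step (frame)
    best_path = [max(range(len(p)), key=p.__getitem__) for p in frame_probs]
    # 2. Collapse consecutive repeats and 3. drop blanks
    decoded = []
    previous = None
    for label in best_path:
        if label != previous and label != BLANK:
            decoded.append(alphabet[label - 1])
        previous = label
    return "".join(decoded)


alphabet = "abcdefghijklmnopqrstuvwxyz"


def one_hot(label, n=len(alphabet) + 1):
    """Toy distribution that puts most of the probability on one class."""
    return [0.9 if i == label else 0.1 / (n - 1) for i in range(n)]


path = [8, 8, 0, 5, 12, 12, 0, 12, 15]  # h h - e l l - l o
frames = [one_hot(i) for i in path]
print(ctc_greedy_decode(frames, alphabet))  # hello
```

The blank between the two "l" runs is what lets CTC output a genuine double letter: without it, "ll" would collapse into a single "l".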